Tech Blog

Here I'll be sharing insights from my professional experiences and studies in data science and web development. There'll be plenty of Wagtail, Django and Python, a bit of JavaScript and CSS thrown in, and more on data science & engineering. There might even be a bit of time for some project management and business analysis too.

I'll also provide insights into how this site was made, as well as code examples and thoughts on how those could be further developed.

Feel free to leave questions or comments at the bottom of each post - I just ask people to create an account to filter out the spammers. You won't receive any unsolicited communication or find your email sold to a marketing list.

Achieving Recursive Blocks in Wagtail Streamfield

It's not obvious from the Wagtail documentation that it is possible to build recursive blocks. Building on previous articles here, we can make use of the undocumented local_blocks attribute to build recursion into our streamfields. This article gives a working example using a simple file system analogy.

Create a Wagtail Code Block with highlightjs

This article guides you through creating a custom Wagtail block designed to display code with automatic syntax highlighting, using the highlightjs library. It covers key elements such as extending Wagtail’s default block types, customising the admin interface with CSS, and dynamically loading external libraries as needed. The block provides editors with options to collapse code, display custom titles, and preview the formatted code directly in the Wagtail admin interface.

Additionally, the article discusses structuring the block’s back-end and front-end components, ensuring proper interaction between the block form and the highlightjs library. It also explains the importance of responsive design and efficient UI/UX choices, helping editors easily work with code blocks while maintaining multilingual compatibility.

Loading CSS and Javascript On Demand in a CMS Environment

In modern web development, particularly within a CMS environment, the efficiency of resource management is paramount. Loading JavaScript and CSS libraries only when they are associated with a specific block or component offers significant advantages. This approach not only enhances performance by reducing the overall page load time but also optimises the user experience by minimising unnecessary code execution.

This article demonstrates one strategy for implementing on-demand loading of JavaScript and CSS in a CMS context, thereby enhancing performance and maintainability.

Creating Conditional Logic in CSS

There are times when conditional statements in CSS would be really handy. CSS lacks any if/else type clauses, but it is possible to arrive at a binary value by combining calc with min & max, and then use that value to 'decide' which rule to apply.
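As a rough illustration of that arithmetic, here's a minimal sketch that mirrors it in Python rather than CSS, so the numbers are easy to check. The function names, the `scale` multiplier and the example values are my own assumptions for illustration rather than anything taken from the article, and it assumes whole-number inputs.

```python
# Sketch only: mirrors CSS-style arithmetic in Python.
# A CSS equivalent might look like this (assumed, unitless custom properties):
#   --flag: max(0, min(1, (var(--value) - var(--threshold)) * 1000000));
#   --result: calc(var(--flag) * var(--a) + (1 - var(--flag)) * var(--b));


def binary_flag(value, threshold, scale=1_000_000):
    """Return 1 if value > threshold, else 0, using only multiply, min and max."""
    # A large multiplier pushes any positive difference past 1 and any
    # negative difference below 0; min/max then clamp the result to 0..1.
    return max(0, min(1, (value - threshold) * scale))


def choose(flag, if_true, if_false):
    """Pick one of two values with arithmetic instead of if/else."""
    return flag * if_true + (1 - flag) * if_false


# Example: a larger value once a width passes 600 (hypothetical numbers).
wide = choose(binary_flag(800, 600), 24, 16)
narrow = choose(binary_flag(400, 600), 24, 16)
```

Here `wide` comes out as 24 and `narrow` as 16. When the two inputs are equal the flag is 0, so the 'else' value wins.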

Upgrade PostgreSQL Server: A Simplified Guide for Windows and Ubuntu

Upgrading PostgreSQL server binaries and clusters is critical for ensuring the performance, security, and stability of your database.

This guide will equip you with the knowledge and confidence to efficiently upgrade your PostgreSQL server without wading through pages of dense documentation.

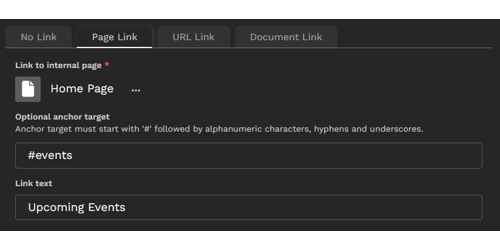

Build an Intuitive Link StructBlock in Wagtail: Simplifying Link Management for Content Editors

Learn how to create a reusable StructBlock in Wagtail that simplifies the management of different link types.

Develop an intuitive, compact, and interactive tabbed interface that empowers content editors to seamlessly select link type, path, and text, all while optimizing page space within the editing interface. Implement a common method to retrieve URL and text for the link regardless of link type, including custom link types.

We'll cover concepts essential for achieving our goal, such as transforming Django's radio select widget into a tab group, constructing an interactive panel interface within the StructBlock using a custom Telepath class, dynamically adding child blocks based on initial parameters, integrating data attributes into block forms, custom StructValue classes and designing adaptable choice blocks.

Wagtail Streamfields - Propagating the `required` Attribute on Nested Blocks

When adding blocks to a custom Wagtail StreamField StructBlock, whether or not those blocks are required may depend on the required status set in the StructBlock constructor kwargs.

Unlike Django form fields, however, the required attribute in Wagtail's Block class (from which all blocks derive) is a read-only property. This means it can only be set in the class declaration rather than during instantiation.

This article presents a straightforward workaround, eliminating the necessity for extensive validation code in each affected block.
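To show what a read-only property means in practice, here's a minimal plain-Python sketch. It doesn't use Wagtail at all: `DemoBlock` and `RequiredDemoBlock` are hypothetical stand-ins, and this is not the workaround the article describes.

```python
# Sketch only: hypothetical stand-in classes, not Wagtail code.


class DemoBlock:
    @property
    def required(self):
        # No setter is defined, so the value is fixed by the class declaration.
        return False


class RequiredDemoBlock(DemoBlock):
    @property
    def required(self):
        # Changing the value means declaring it again in a subclass.
        return True


block = DemoBlock()
try:
    block.required = True  # assigning on an instance is rejected
    assignment_failed = False
except AttributeError:
    assignment_failed = True
```

The assignment raises `AttributeError`, so `assignment_failed` ends up `True`. In this sketch, the only way to get a different value is to override the property in a subclass.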

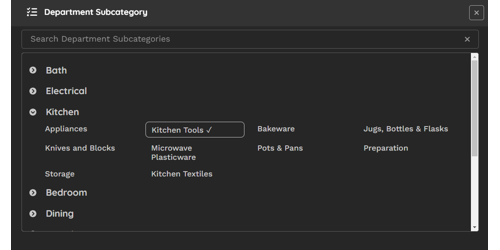

Efficient Cascading Choices in Wagtail Admin: A Smart Chooser Panel Solution

In the Wagtail admin interface, handling cascading or dependent selections can be a challenge due to the absence of active controls. This tutorial unveils a practical solution by guiding you through the creation of a custom 'chooser' panel. This panel not only elegantly displays dependent elements grouped by their parent element, focusing on categories and subcategories, but also incorporates an intuitive partial match filter for swift and efficient selections.

Learn how to implement this custom chooser panel, providing a one-click method to effortlessly manage cascading selections, enhancing your Wagtail experience.

Protecting Your Django Forms: Implementing Google reCAPTCHA V3 for Enhanced Security

Learn how to fortify your Django and Wagtail forms against spam and bots using Google reCAPTCHA V3. Discover seamless integration methods, explore the hCAPTCHA alternative, and enhance user experience while maintaining robust security.

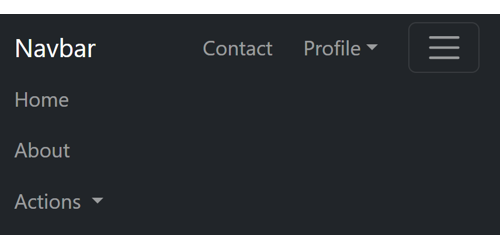

Crafting a Bootstrap Navbar: How to Create Sticky Items for Persistent Visibility in Collapsed Mode

Explore the art of designing a Bootstrap navbar that goes beyond conventional boundaries. In this guide, discover the secrets to creating sticky items that remain visible even in collapsed mode. Elevate your web design with seamless navigation that ensures key elements are always at your users' fingertips.

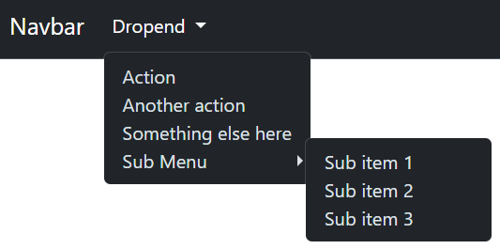

Unlocking Enhanced Navigation with Bootstrap: A Guide to Submenus in Dropdown Menus

Bootstrap has a notable feature gap: it doesn't provide a native component for nesting dropdown buttons. In this article, we'll delve into the art of crafting submenus within Bootstrap dropdown menus. You'll gain insights into seamlessly incorporating them into navbar dropdown menus, handling both collapsed and expanded views. Elevate your web navigation with Bootstrap submenus!

Creating Custom Django Form Widgets with Responsive Front-End Behaviour

This article will take you through the process of creating custom Django form widgets with responsive front-end behaviour. Create a custom Django template and learn how to augment an existing widget with additional HTML and embedded JavaScript. For Wagtail users, learn how to seamlessly integrate your custom widget into FieldPanels and StructBlocks.